This post provides an in-depth analysis of the lessons we learned while protecting Gmail users and their inboxes. After more than a decade of protecting Gmail, we felt it was time to share the key lessons we learned the hard way, so that everyone involved in building an online product can benefit from them. To that end, with the help of various Gmail safety leaders and long-time engineers, I distilled these lessons into a 25-minute talk for Enigma called “Lessons learned while protecting Gmail”. While such a short talk is great at providing an overview, it forces you to leave out details that provide deeper insights. This blog post complements the talk and fills that gap by giving a more complete explanation of the lessons that need one.

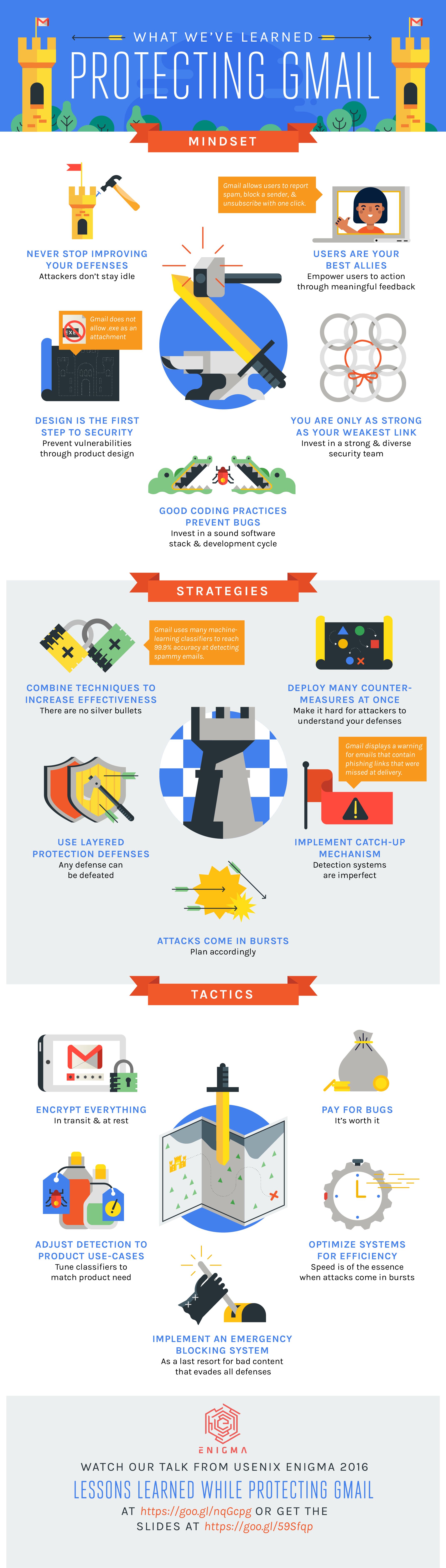

If you haven’t watched it yet, you will find the video of the talk right below, the slides here, and an infographic that summarizes the lessons at the end of this blog post.

Organization of lessons

Although I did not state it explicitly during the talk, the infographic shows that I divided the lessons into three broad areas, each applying to a different aspect of product security. We found that thinking in terms of these areas gives us the right framing and helps us focus on what we can do to improve security. These areas are:

- Mindset: How do we approach security? What are the fundamental principles that we build our security on? One of them, for instance, is investing in a strong and diverse security team.

- Strategies: What are the medium-term and long-term design choices and approaches that shape how Gmail is constructed? For example, one of our core strategies is to make sure that the Gmail email processing pipeline is resilient to bursts, since spam and malware campaigns come in huge waves.

- Tactics: These lessons are focused on a specific aspect of security and how to deal with it. For example, the tactic “pay for bugs” is our playbook response on how to deal with external reports: we not only welcome them but encourage them through financial rewards because they are very helpful in making Gmail more secure.

For the talk, it was helpful to think in terms of these three areas because that forced us to keep only five key lessons for each, which resulted in a very clear, structured and actionable talk that ended up being super well received.

Mindset

Design is the first step to security

_Prevent vulnerabilities through product design_

Making a product or feature secure requires thinking about security as early as the design phase, not retrofitting it when the product is about to launch or, worse, after the launch. Being secure by design is very important because it is easier not to build a feature than to take one away from users or to secure something that is not needed. For example, Gmail by design doesn’t allow Windows executable files because in the design phase we asked ourselves: do we need to allow Windows executable files, given that they let malware be installed with one click? In hindsight, that might seem like an easy question, but 12 years ago, every mail provider allowed them. So we went back and forth, but in the end we decided that the risk and complexity of allowing executable attachments were not worth it. We received very few complaints and a lot of praise for this choice, in part because we were upfront about it and didn’t have to backpedal by taking this feature away from our users.

Bottom line: When building a product or a new feature, always ask yourself if you need to support use-cases that you know are problematic from a security standpoint. They might not be needed to ensure product growth and can always be added later if needed.

Users are your best allies

_Empower users to take action through a meaningful feedback UI_

Users have a vested interest in helping to keep a product clean because they are using it. Drawing on their willingness to help is one of the best ways to scale your abuse-fighting capabilities, as they can quickly spot very subtle forms of abuse (e.g. targeted spam). Gmail greatly benefits from our users telling us a message is (or is not) spam, as it helps us to build better mail classifiers.

Bottom line: Spending time building tools and UI that allow your users to fight abuse is a good investment, as users have a vested interest in helping you and this approach scales with the number of users.

Strategies

Combine techniques to increase effectiveness

_There are no silver bullets_

The hard truth is that no system or algorithm is perfect, and even the most advanced machine-learning algorithms produce false positives and false negatives. The way to deal with imperfect systems is to combine them to improve detection and to overcome their limitations. Combining judgements is hardly new: the mathematical foundation behind combining imperfect systems (ensemble learning), called the Condorcet jury theorem, was devised in 1785 to help make justice fairer. It led most modern justice systems to rely on a set of jurors (each with imperfect judgement) rather than a single judge to assess whether a person is guilty, as doing so is mathematically more accurate, provided each juror is right more often than not and their errors are independent.

Bottom line: If you have two ways to detect the same thing, don’t choose: implement both and combine their results to make your system stronger.
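To make the jury-theorem argument concrete, here is a minimal sketch of majority voting over several detectors. It is an illustration only, not how Gmail combines its classifiers: the function names are made up for this example, and the accuracy formula assumes that detectors err independently and that each is right more often than not.

```python
from math import comb


def majority_vote(verdicts):
    """Return True when a strict majority of detectors flagged the item."""
    return sum(verdicts) * 2 > len(verdicts)


def majority_accuracy(n, p):
    """Probability that a strict majority of n independent detectors,
    each correct with probability p, reaches the correct verdict."""
    if n % 2 == 0:
        raise ValueError("use an odd number of detectors to avoid ties")
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(n // 2 + 1, n + 1)
    )


if __name__ == "__main__":
    # Assumed per-detector accuracy of 80%, chosen only for illustration.
    for n in (1, 3, 5, 9):
        print(n, round(majority_accuracy(n, 0.8), 4))
```

Under these assumptions, three detectors that are each right 80% of the time reach 89.6% as a group. The same formula also shows the flip side: if each detector is right less than half the time, adding more of them makes the combined verdict worse.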

Any defense can be defeated

_Use defense in depth with multiple layers of protection_

Since no combination of detection systems at a given layer is perfect, you need to add multiple layers of defense to make it even harder for attackers. For example, Gmail implements defense in depth to prevent content injection in HTML-based email. An email is first scanned on the server by a set of analyzers, then it is processed on the client in case something was missed, and finally we use CSP (Content Security Policy) to ask the browser to block anything that has escaped us. Each of those three layers uses a distinct set of technologies and the analysis occurs at different stages, which makes the defenses harder for attackers to probe.

Bottom line: While having a single big choke point for defense is appealing and easier, in the long run a better but more burdensome strategy is to implement defense at every step to ensure that circumventing your defenses is hard and expensive for attackers.
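As a rough sketch of why layering pays off, the snippet below chains three independent checks and computes the chance that an attack slips past all of them. Everything here is hypothetical: the three check functions are toy stand-ins rather than Gmail’s analyzers, and the miss rates are assumed numbers that only hold if layers fail independently.

```python
from math import prod


def is_blocked(content, layers):
    """Content is stopped as soon as any single layer flags it."""
    return any(layer(content) for layer in layers)


def evasion_probability(miss_rates):
    """Chance that an attack slips past every layer, assuming the
    layers miss independently of one another."""
    return prod(miss_rates)


# Hypothetical toy checks; real analyzers are far more involved.
def server_side_check(html):
    return "<script" in html.lower()


def client_side_check(html):
    return "onerror=" in html.lower()


def last_line_check(html):
    # Stand-in for a policy enforced at the very last stage.
    return "javascript:" in html.lower()


LAYERS = [server_side_check, client_side_check, last_line_check]

if __name__ == "__main__":
    print(is_blocked("<img src=x onerror=alert(1)>", LAYERS))
    print(evasion_probability([0.1, 0.1, 0.1]))
```

With three layers that each miss 10% of attacks, only about one attack in a thousand gets through all of them under the independence assumption, which is why using distinct technologies at each layer matters.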

Detection systems are imperfect

_Implement catch-up mechanisms_

Once you have acknowledged that no system is perfect, the next logical step is to devise catch-up mechanisms to mitigate the errors made. For example, in Gmail it (rarely) happens that we don’t catch right away that a link in an email leads to a phishing page. To catch up with these missed detections, we can display a red banner on top of the email to warn the user of the risk.

Bottom line: Think about and build catch-up mechanisms to mitigate mistakes made by defense systems.

Attacks come in bursts

_Plan accordingly_

Surprisingly, quite a few people assume that online services face a steady stream of attacks, but this couldn’t be further from the truth. What we observe is a huge burst of malware and bad emails followed by a period of calm. Sure, there are always some attacks, but the bulk of them arrive in huge spikes of activity that occur at unpredictable intervals. Seeing 40 times more attacks than the previous day is not uncommon at all. This forces the defense to be designed so that it can absorb bursts. This type of design is drastically different from a user-oriented system that has sustained traffic and (most often) predictable growth. Not being able to use historical data to predict future usage is one of the hidden and challenging problems of designing security systems.

Bottom line: When designing defenses, keep in mind that they will face large bursts and make sure they will be able to cope with them.
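One common way to absorb bursts is to put a bounded buffer in front of the expensive analysis step, sized for the spike rather than for the average day. The sketch below illustrates that idea only. The class name, capacity, and drain rate are assumptions made for this example, not a description of Gmail’s pipeline.

```python
from collections import deque


class BurstBuffer:
    """Bounded queue: absorbs a burst up to `capacity`, defers the overflow."""

    def __init__(self, capacity, drain_per_tick):
        self.capacity = capacity
        self.drain_per_tick = drain_per_tick
        self.queue = deque()
        self.deferred = 0  # items refused because the buffer was full

    def offer(self, item):
        """Accept the item if there is room; otherwise count it as deferred."""
        if len(self.queue) >= self.capacity:
            self.deferred += 1
            return False
        self.queue.append(item)
        return True

    def tick(self):
        """Process at most `drain_per_tick` items, oldest first."""
        count = min(self.drain_per_tick, len(self.queue))
        return [self.queue.popleft() for _ in range(count)]


if __name__ == "__main__":
    # Assumed numbers: a normal day is ~10 items per tick, a burst is 40x that.
    buffer = BurstBuffer(capacity=500, drain_per_tick=50)
    accepted = sum(buffer.offer(i) for i in range(400))
    print(accepted, len(buffer.tick()), len(buffer.queue), buffer.deferred)
```

The design choice to notice is that capacity is derived from the worst burst you expect, while the drain rate is derived from what the analyzers can sustain. Anything beyond capacity is deferred explicitly instead of silently overloading the system.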

Make it hard for attackers to understand your defenses

_Use overwhelming force and deploy many countermeasures at once_

This is probably the most subtle of the lessons. Attackers constantly probe systems to find loopholes. For example, at some point one of Gmail’s spammers became very astute at finding subtle bugs in our parsers that he could exploit. In one case, he realized he could use the @ ambiguity (the character is used both in email addresses and in HTTP links) to confuse our parsers, and for a brief period of time he successfully evaded detection. This is why it is very important to make probing more difficult for attackers by rolling out multiple changes at once. That way they are overwhelmed by the number of things to test and can’t easily figure out what changed.

Bottom line: When rolling out changes to your defenses, don’t rush (too much) and release multiple changes at once.
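The post does not say exactly which parser bug was exploited, so the snippet below only illustrates the general class of @ ambiguity in links: anything before an @ in the authority part of a URL is user information, not the host. The naive regex and the example URL are made up for this sketch, and the comparison uses Python’s standard `urllib.parse.urlsplit`.

```python
import re
from urllib.parse import urlsplit

# A deliberately naive pattern: it stops at the first character that is
# not a word character, dot, or hyphen, so it stops right before the "@".
NAIVE_HOST = re.compile(r"https?://([\w.-]+)")


def naive_host(url):
    """Host as a simplistic parser would see it."""
    match = NAIVE_HOST.match(url)
    return match.group(1) if match else None


def actual_host(url):
    """Host as a standards-aware URL parser reports it."""
    return urlsplit(url).hostname


# Hypothetical link using reserved example domains.
URL = "http://accounts.example.com@attacker.example/login"

if __name__ == "__main__":
    print(naive_host(URL))   # what the naive parser believes
    print(actual_host(URL))  # where the link actually leads
```

Here the naive parser reports `accounts.example.com` while the real destination is `attacker.example`. A detection system that checks the former against an allowlist would wave the link through.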

Tactics

Implement an emergency system

_It is your last resort for bad content that has evaded all of your defenses_

What will hurt you the most is not what you have planned to defend against, but what you haven’t planned for. Our inability as humans to predict and plan for such impactful events is described by the black swan theory, in which these rare events are called black swans. If you want to read more (and you should!) about this fascinating theory and its impact on all aspects of human life, read the eponymous book: The Black Swan: The Impact of the Highly Improbable. For security systems, the black swan theory means that the techniques attackers will use to bypass your system will be things you haven’t thought of, and they will catch you by surprise. For example, until a few years back, Gmail didn’t forbid .lnk files because we didn’t think that attackers would be able to exploit them. It turns out that they were able to do so using Windows PowerShell, a technique common these days, and so later we had to ban them. In hindsight, it is obvious that we should have done so originally, but at the time Gmail was created (2002) that was not so apparent and PowerShell didn’t exist. So while we couldn’t predict this attack, we had created an emergency system that allowed us to quickly block all .lnk files as soon as we discovered this issue.

Bottom line: You can’t predict what an attacker will do, and they will catch you off-guard. What you can do is build emergency tools and set up processes ahead of time so that you can react quickly when something happens.
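A minimal sketch of what such an emergency lever can look like is a blocklist that operators can extend at runtime, without waiting for a new release. The class and method names below are hypothetical and the matching is intentionally simple. It illustrates the idea, not Gmail’s actual emergency system.

```python
import threading


class EmergencyBlocklist:
    """Attachment-extension blocklist that can be extended while running."""

    def __init__(self, extensions=()):
        self._lock = threading.Lock()
        self._blocked = {self._normalize(ext) for ext in extensions}

    @staticmethod
    def _normalize(extension):
        # Accept "lnk", ".lnk", or ".LNK" and store them all as ".lnk".
        return "." + extension.lower().lstrip(".")

    def block(self, extension):
        """Add an extension to the blocklist, effective immediately."""
        with self._lock:
            self._blocked.add(self._normalize(extension))

    def is_blocked(self, filename):
        """True when the filename ends with any blocked extension."""
        name = filename.lower()
        with self._lock:
            return any(name.endswith(ext) for ext in self._blocked)


if __name__ == "__main__":
    blocklist = EmergencyBlocklist([".exe"])
    print(blocklist.is_blocked("invoice.lnk"))  # not yet known to be dangerous
    blocklist.block("lnk")                      # emergency response
    print(blocklist.is_blocked("invoice.lnk"))
```

The point of the sketch is the shape of the tool: the rule set is data that can change in seconds, so responding to a surprise does not depend on shipping new code.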

Adjust detection to product use-cases

_Tune your machine-learning classifiers to match your product needs_

When you make a statistical decision, you can err on one side of the decision or the other. For example, you can decide to detect more spam at the expense of flagging good mail as spam (false positives), or you can reduce the number of good emails flagged as spam at the expense of having more spam go undetected (false negatives). How to balance the two (to a reasonable level, that is) is product specific. In the Gmail case, we consider that flagging a good email as spam and sending it to the spam folder is worse for our users than not detecting a spam message and letting it go to the inbox. The rationale is that users rarely look in their spam folder and may therefore miss a potentially important message, whereas it takes only a swipe to remove or report a spam message that is in their inbox. Conversely, for login risk analysis the classifiers are tuned to err on the side of caution, as it is far more costly for our users to let a phisher break in than to be challenged to prove they are who they claim to be.

Bottom line: When making a statistical decision, ask yourself on which side of the decision you want to err based on your product use-cases.

If you have enjoyed this blog post, don’t forget to share it on your favorite social network and let me know which lesson is your favorite. Here is the infographic that summarizes the lessons we learned: